SRE Leaders Panel: Managing Systems Complexity

Originally published on Failure is Inevitable.

In our previous panel, we spoke about how to overcome imposter syndrome in high-tempo situations, and how culture directly affects the availability of our systems. Building on that discussion, we gathered leading minds in the resilience industry to discuss how SRE can manage systems complexity, and how that's tightly intertwined with business health, especially in the context of the current health and social crises.

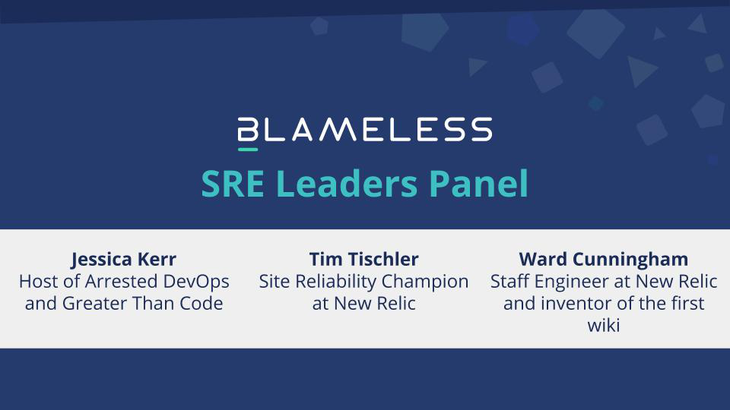

Our wonderful panelists were:

- Jessica Kerr, symmathecist and host of Arrested DevOps and Greater Than Code

- Tim Tischler, Site Reliability Champion at New Relic and formerly CTO of Tripgrid

- Ward Cunningham, inventor of the world’s first wiki and Staff Engineer at New Relic

Read on to learn how systems complexity has evolved since the early days of Agile, what the future of testing will look like in surfacing systems boundaries, how to understand socio-economic issues through the lens of SRE principles like observability, and much more.

The following transcript has been lightly edited for clarity.

Amy Tobey: Hi everyone. I'm Amy Tobey. I'm a staff SRE at Blameless, and I'll be your facilitator today. Our panelists are all people I've been seeing around the resilience and software communities for a while now, and getting them all in the same room is really exciting to me.

Jessica Kerr: Hi, I'm Jessica Kerr. I call myself a symmathecist in the medium of code. A symmathesy is a learning system made of learning parts, and software together with the teams that build it is one. I am part of the resilience engineering and DevOps communities, and various language communities. Software is endlessly fascinating because it is endlessly complex, and it's only getting more interesting as we get better at it.

Amy Tobey: Great. Which language do you love best right now?

Jessica Kerr: Right now, if I had a choice, I'd use TypeScript. It's not that I love it best; it's that I recognize I need to try to be actually good at one language instead of doing all of them. But I also write Ruby and Java these days.

Tim Tischler: I'm Tim Tischler. I'm a site reliability champion at New Relic. I'm also in the master's program in Human Factors and System Safety at Lund University. For much of my career I've been an individual contributor on the Ops side of things: Ops, then DevOps, then SRE. The other half of my career has been in management of product-focused front-end efforts, front-end UI teams, and things of that nature. I've gone back and forth between the two tracks. When I first found REdeploy and a lot of the systems safety and resilience engineering material a few years ago, I couldn't stop reading it, and I've been doing that ever since.

Ward Cunningham: I’m Ward Cunningham. Most of what I know about resilience I learned from Tim. That was maybe two or three years ago now: we were at a company offsite and he handed me this brown book and said, “This is what I'm studying.” I read it and recognized the transition that computing had gone through. I've been through transitions before. Probably the most influential on me was when desktop computing became a thing. Before that, computers were in big rooms and had staff that could take 18 months to write a program, and that wasn't working on the desktop. The other thing was that people had a lot of expectations that they couldn't quite articulate. This whole Agile software thing grew up around getting a team close to somebody who needed the software. We called them the customer because they were paying us to deliver software. But it was really more of a partnership with a division of responsibilities, and that became Agile.

I think it's played out pretty well. A lot of people ask me whether, if I had it to do all over again, I'd do it differently. Well, I might color balance the picture a little better, but no, it's as right as it's ever been and will continue to be right, in the same way that Newton's laws are right and worth studying. But there's a lot more going on in the world now, and I think that's really the subject that'll be fun to get into.

Amy Tobey: Yeah, you mentioned transitions and brought up your role in the Agile movement. I want to talk about major transitions that have happened in software, and apply that horizontally. The one I think about is game development. I'm not a game developer, I'm an SRE, but I've been fascinated with games since I was a kid and kind of broke into my career via games. When I was a kid, there was Atari, and those games were developed by hardware engineers who oftentimes knew assembly, or knew how to build the chips for the cartridges, and so on.

The complexity there was largely in the electrical engineering space, right? Now we're into code bases of millions and millions of lines to bring up a simple 2D game. I think a lot about how complexity has developed in that space over the last 20 to 40 years. What's yours?

Jessica Kerr: I think of it as software interacting with people so much more closely. Ward, as you were describing that transition, I was thinking about how, when the computers were far away in a room, you just didn't have the same expectations that you do when the computer is right in front of you and you can interact with it much more closely. Now we carry our computers around in our hands. Human-computer systems are more and more intertwined. These expectations that we can't articulate, even if we could, would immediately be wrong as soon as you deploy the software, because the software isn't just clicking into an existing system and automating something that used to be paperwork; it's changing that system. It's changing the people who use it. It's immediately wrong because it's got to evolve and grow inside the system that it's now part of.

Amy Tobey: A moving platform trying to hit a lot of moving targets.

Jessica Kerr: We're creating the target at the same time that we're aiming for it.

Ward Cunningham: Well, I think what's most interesting is how hard you have to work to understand what's going on. In our mathematical phase, either you could write the proof or you couldn't. In the Agile phase, you had the tests, so they passed or they didn't. Now we're not sure how to tell what's going on. It's evolving very rapidly, and a lot of it has to do with making sense of every surprise. I will say that I had this habit: I'd go to conferences and people would tell me about their Agile project, and about all the Agile practices they applied.

I'd say, “Well, what did you learn that you didn't expect to learn?” They'd say, “Yeah, that's a good question,” and then something would come to mind. That's fabulous. Instead of telling me how they did Agile, they'd tell me what they didn't expect to learn. It's a powerful question.

Amy Tobey: That's great for illuminating the story of how things have changed over the years, too.

Tim Tischler: To go along with what you're talking about with game development, it's interesting. Once upon a time, when you wrote a game, it was for one user. It was a single-person game, not on a network, and it was one person writing it on a computer and iterating. Then after that we got to teams of people working on an application that had a couple of moving parts, and the end users were expected to be a small team in an office using the thing after it had been deployed.

Then after that, we got to teams working on systems that were moving so fast, and that were opened up to the entire internet. Not only are your users just your users, they're also the hackers, they're also the criminals, they're also the bots; it's this huge giant world out there. Knowing where the bounds of your system are, or who's using your system and to what ends, is completely impossible, just totally impossible.

It's gone from looking downward into the complexity of the hardware and going, “Okay, I have a mental model of how this works, and tomorrow my mental model of my hardware will be the same because the hardware is the same,” to where I have no idea where the bounds of the system are. Whatever we think we know today won't be the same, because it will change tomorrow and the next day. It's never-ending change; it's an ocean of change and of contexts as far out as we can imagine.

Ward Cunningham: That is sort of a symbol of the success of information technology, really: it has become so important. It's not a curiosity anymore. I'm not sure we were fully prepared for that. But we wouldn't want to hope for less, really.

Amy Tobey: I sense a theme about being unable to sense the boundaries of our systems. But we still have this thing that we do in our daily work, that we get paid to do, where we have to define boundaries. That's a big part of our work as software engineers.

One of the things that we’ve discussed was how testing today maybe isn't living up to people's expectations in terms of defining the boundaries of our systems, or protecting those boundaries. How does testing, in your view, help define those boundaries, and how do we start to create more confidence in these huge complex systems? When all four of us talk about this, we kind of have to talk about very abstract things to even grapple with this problem. But we can start with testing as one of the tools in our toolbox. How do you see testing evolving in the next five years, in terms of dealing with complexity and making systems more predictable?

Tim Tischler: Something has been nagging at me for about a decade now, and it's coming more into view.

In 2010 or 2011, I would ask, “What's the difference between testing and monitoring?”

Ops people would go, “What, they're different?”

QA folks would go, “What, they're different?”

Java developers would go, “Testing? Monitoring? What's that?”

Unit tests are still a good, necessary, and important part of it. But I think the testing pipeline just needs to keep extending further and further out into the living, breathing, chaotic, complex, moving system, so we know that our expectations of that system are being met.

It started out as the expectation of my little piece of code being met. Then we said, “Okay, are the expectations of these things chained together being met?” We had integration tests. Then at some point people started going, “Wow, it's really hard to capture the right data in my integration tests and know that we're getting it, so let's move out into the production landscape.”

Then there's chaos engineering and traffic shaping and canary deploys and SLOs and all of that. Isn't that really just a more multi-dimensional extension of the ideas that were originally in tests? It's hard to test a living, actually working system in isolation; the testing has to be inside the system.
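To make Tim's framing a little more concrete, here is a minimal Python sketch, under stated assumptions, of an availability SLO written as if it were a test assertion evaluated against production traffic instead of a test fixture. The `WindowCounts` shape, the `check_slo` helper, the 99.9% target, and the sample numbers are all hypothetical; real SLO tooling typically evaluates burn rates over several windows, which this sketch leaves out.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass
class WindowCounts:
    """Hypothetical shape: request outcomes observed in production over one time window."""
    total: int
    good: int


def check_slo(window: WindowCounts, target: float = 0.999) -> Tuple[bool, float]:
    """Evaluate an availability SLO the way a test asserts an expectation.

    Returns (met, budget_remaining): whether observed availability meets the
    target, and the fraction of the window's error budget (allowed failures)
    that is left. A negative value means the window overspent its budget.
    """
    if not 0.0 < target < 1.0:
        raise ValueError("target must be strictly between 0 and 1")
    if window.total == 0:
        return True, 1.0  # no traffic, so none of the budget was spent
    bad = window.total - window.good
    allowed_bad = (1.0 - target) * window.total
    availability = window.good / window.total
    return availability >= target, 1.0 - bad / allowed_bad


# Made-up windows, for illustration only.
healthy = WindowCounts(total=100_000, good=99_950)
degraded = WindowCounts(total=100_000, good=99_800)
print(check_slo(healthy))   # met, with roughly half the budget left
print(check_slo(degraded))  # not met, budget overspent
```

The design choice here is to report the remaining error budget alongside the pass/fail bit, since a window that barely passes and one that passes with room to spare call for different responses.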

Amy Tobey: We're measuring with so little certainty, trying to validate hypotheses that have so many angles to them.

Ward Cunningham: We couldn't build these systems if we couldn't test our code in isolation. I think that system designers accept some responsibility for designing the test automation as just part of their job. There's a lot of creativity there that has allowed us to build systems that work well enough. That's another case where testing as it was envisioned a decade ago is still valuable.

But Tim just ticked off four or five things that we've got to do on top of all that, if we really want to wield what we've built and make it do what we want. It's easy for a system to get out of control, to the point where you're afraid to touch it; that would be a sign that you can't wield it. Keeping hold of that handle is a new thing. Jessica was describing the interaction between the people and the systems; it's all woven together, and it becomes more like sociology than mathematics.

Jessica Kerr: So Ward mentioned early on that one reason Agile started was these expectations that we can't quite articulate. There are levels at which you can articulate your expectations. You can get a subsystem that you understand sufficiently, where the hardware and its demands are stable enough that you can do mathematical proofs on it.

That's awesome, because that's how you know it's going to meet your expectations: you can articulate them, which is so hard. Then you get into tests, where I can articulate some of my expectations. The part you're missing is everything else the system might do. Then you get into checks like property-based testing, where you're like, “Okay, well, what if I throw in everything else that I think is valid?”

Then you get into security checks, and those are all the other things you'd never think of. Why would any human do that? Well, because it's not a human; it's a bot, or an attacker with a tool. It's hard to define everything the system shouldn't do.

As we go to wider and wider scales and recognize that there are way more possibilities than we could ever check (you're never going to check all the possible cross-site scripting attacks), you move from checking expectations to looking for surprise. When you get into chaos engineering, or when you're talking about observability, you're looking for surprises. Then, can you give yourself the tools to make sense of those? I like Ward’s term “wielding the system”: being able to make sense of what you find, and then changing the flow, changing how that system looks to that particular IP address, for instance.
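For readers who haven't used property-based testing, here is a minimal, hand-rolled Python sketch of the idea Jessica describes: instead of asserting on a few hand-picked examples, you state properties that should hold for every valid input, then throw many generated inputs at them. The function under test (`dedupe_preserving_order`) and the helpers `check_property` and `random_int_list` are hypothetical; in practice a library such as Hypothesis would handle generation and shrinking, and this sketch does not reproduce its API.

```python
import random


def dedupe_preserving_order(items):
    """Hypothetical function under test: drop repeats, keep first occurrences in order."""
    seen = set()
    result = []
    for item in items:
        if item not in seen:
            seen.add(item)
            result.append(item)
    return result


def check_property(prop, gen, trials=500, seed=0):
    """Run prop on many generated inputs; return the first failing input, or None."""
    rng = random.Random(seed)  # seeded so any failure is reproducible
    for _ in range(trials):
        value = gen(rng)
        if not prop(value):
            return value
    return None


def random_int_list(rng):
    # "Everything else I think is valid": lists of small ints, including empty ones.
    return [rng.randint(-5, 5) for _ in range(rng.randint(0, 20))]


def no_duplicates(xs):
    out = dedupe_preserving_order(xs)
    return len(out) == len(set(out))


def keeps_every_value(xs):
    return set(dedupe_preserving_order(xs)) == set(xs)


def idempotent(xs):
    once = dedupe_preserving_order(xs)
    return dedupe_preserving_order(once) == once


for prop in (no_duplicates, keeps_every_value, idempotent):
    failing = check_property(prop, random_int_list)
    print(prop.__name__, "ok" if failing is None else f"failed on {failing}")
```

Note that passing these checks still only covers the expectations someone thought to write down, which is exactly the gap Jessica points to next with security checks and looking for surprise.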

Amy Tobey: That brings up observability and cast and line. The other one that I was thinking of as you were talking was about red teams trying to find boundaries, which is another part of what chaos engineering does.

Jessica Kerr: What is a red team?

Amy Tobey: There's the old terminology of the white hat and black hat hacker, which has its own problems in the etymological space. But this newer term got coined for the red teamer, which is basically people inside your own organization who test your perimeter on a regular basis. They might do things like social attacks or technical attacks against the front door, and keep coming at it to build resilience through constant attack.

Ward Cunningham: When they actually find a way in, engineers will say, “Well, I didn't think that was my problem.”

Amy Tobey: But that's great, because they've discovered a surprise. Now we get to react to it. Tim, say I'm a red teamer who’s hacked into your system. You've gotten this news as an incident investigator; what kind of questions do you start to ask?

Tim Tischler: First it's understanding what you did, how you knew to do it, and the mindset that you were in: How did you know that this was a thing to attack, and how did you know that it was successful? That helps us better understand the mindset of the people who are making the attacks. It leads into the socio-technical question: is it the people or the systems?

Then it goes to observability: How can we see that attack? How can we see what it was that happened? Are there dark spots in our observability, as we push observability beyond the boundaries of just code into the networking layer and into the firewalls and into the databases and everywhere?

Then ask the question of the technical system: Can you show me this attack all the way through, and how it worked? If you can't see it, it's really hard to reason about it, to search for it, and to explore the space. So you're asking questions of the humans and asking questions of the technology, and you need both of them to understand the context.

Amy Tobey: I think one of the things that we do as incident investigators is try to take what we figure out there and re-export it back into our organization, into the technical systems and to the people internally. Can you talk a little bit about how you do that, Tim?

Tim Tischler: The biggest challenge is how you do that. I like Nora Bateson's idea of warm data. We love metrics, we love MTTR, we love SLAs, we love severities. We love all of those nice clean metrics that executives can look at on reports and think that they know something. And they do; there is value in those.

But it's so important to know the context. We pull data out of a context and turn it into a metric, but how do we export that context, learn something, and then put it back into the context?

One of the huge challenges is that when you see one of these vulnerabilities, there are so many ways things could fail. Well, this only failed once and it's going to take three weeks to fix; do we fix it? Or was that really just a crazy one-in-a-billion event, where these eight things came together to cause it, and it's going to be expensive to fix compared to the little small ones?

You can see this one contributing factor showing up again and again in lots of tiny incidents. Well, let's go fix that one; it's one of the roots in all of these incidents. But having the vision and the knowledge to see those really involves knowing the context, how the work is done, and the details of enough of these incidents that a human can generalize and go, “Aha, look what I found across all of these.”

What I find is that those things don't show up in written retrospective summaries very often. You really need to do a deeper analysis to find that a certain restart storm always involves Ruby and always involves a particular version of Rails. But once you find it and can see that it's across a bunch of incidents, then you learn.

Amy Tobey: We can look at these macro scale things happening. Then we need to reason back to the lower level things that are contributing to it, and dial in on the highest value stuff to work on. Jessica mentioned “narrative”, which is really relevant; basically how to communicate across people.

Ward Cunningham: I think one of the challenges we have is that, because our systems are bigger, we have more people with their fingers in them. We organize them as teams and we expect them to help each other, but you'll learn something over here and it's very hard to communicate it over there, because there's no context for even understanding the problem.

You can't help but become more specialized, in your programming languages and so forth, and in understanding these three subsystems and the way they interact in real time. This is an area where I think there's a lot of opportunity: if you learn something, you ought to write it down and share it.

But what you learn when you read something, or when someone explains something to you, is a little different from what you learn when you push on something and see what happens. When you take an action and operationally see the result, especially if the system doesn't break too quickly, you can push on it and see how it behaves.

We used to say in the early days of Agile: Take the thing you fear the most and do more of it. Back then we distributed software on little diskettes, and building the installer was the thing you did at the end of the project. If you waited until the end of the project, it was going to be a nightmare. But if you just wrote 117 and delivered that on an installer, that was kind of pointless. Your customer installs and it says, “Your application will appear here someday.” Then for the entire project, you're practicing every week, making another installer. But that activity is what creates the opportunity to learn: having a chance for people to reach into the system and feel it work.

Amy Tobey: That kind of visceral experience that’s actually active in the system.

Ward Cunningham: That's right, and this is why, when I hear observability, I think, “Well, I want to be able to look over here. I want to touch it and I want to see what happens.” If it gets rolled up into some throughput statistic, you can poke in a lot of places and the throughput doesn't change, but there could be something very important changing, and it's going to help you solve a problem someday.

Jessica Kerr: Regarding acting and learning, I've been reading a couple of papers on enactive ecology, and I think about how, as humans, we learn in order to act, to decide on our next actions. Sometimes we act in order to learn. Acting and learning are naturally entwined in our brains. I can't get a good understanding of code unless I'm changing it. Even if I throw that refactor away, I need to get my fingers in it. Changing the system and learning the system are practically the same activity.

Ward Cunningham: Yeah, you get a more comprehensive mental model of what's possible. I like to savor my error messages. I'll write something stupid without even trying, and I get these crazy error messages and I say, “Oh, I wonder why the system said that.” Then late at night sometime when I'm trying to finish something I get that error message and say, “I know what that means. It doesn't mean what it says.”

Jessica Kerr: I know who wrote that.

Ward Cunningham: In constructivist education, you do projects aligned with your interests and your curiosity, and you learn a lot that way. It's common in private schools. Of course, that thread goes way back, and it happened to drift into the Learning Research Group at Xerox PARC, which built Smalltalk, and so much of my career has been imprinted by Smalltalk.

You had this mouse and you'd poke at things. That system was wonderful in that if you wanted to know how to do something, you just did it and hit interrupt real quick. Then it gave you a little trace that told you how to do it.

Amy Tobey: Let me take a second to glow about my son, because I watch him do this all day. He and his friends get on Skype and play Minecraft and do exactly that. They build little machines with branches and IFs and ORs all through them, huge levels with resets and templates that they can copy around. I'm watching him pick this up, and I'm not teaching him a darn thing. I'm working; I'm a single parent. He's just sitting there poking at the system, trying commands, and working his way toward his goal. It's really cool.

Ward Cunningham: Let's talk about a team, a group of people who own some part of the system and need to improve it. There is this tendency to say, “Well, I'll do whatever you want me to do, Boss.” Then you're giving up that opportunity to know your system better. You might know your boss better, but then there’s bureaucracy as your boss has a boss and things float down from crazy places.

Amy Tobey: I think you're edging up on the fallacy of control over social systems.

Ward Cunningham: Right, omnissions and adaptive capacity. How do you build adaptive capacity? Reading the error messages that you foolishly created gives you a little bit, but that worked when there was one program running on one computer. Now the adaptive capacity is at a systemic level that is alive.

Jessica Kerr: Yeah, totally. Whenever I see an error message that confuses me, I just have to stop and put a better error message in, right there.

Amy Tobey: Yeah. I've noticed that once engineers have worked a few late-night calls, trying to debug software under pressure, they tend to become a lot more picky about log messages each time they work an incident and have to go back and read those messages.

Jessica Kerr: Yeah. As babies, we learn how to speak by doing it. We start out with “new exception throw poo,” and then we start getting more specific.

Amy Tobey: Oh, like, “Where do I go from that?” We nudged up against adaptive capacity and how we build it. Tim, I know you're pretty well read in this stuff. I want you to bring us a little closer to the intersection of how we run our organizations in these hierarchies and how we grow adaptive capacity. Hierarchy is what we've been using at least since the industrial age, and basically forever in human history. But our systems are growing to the point where the old models of using that hierarchy for control are preventing us from developing adaptive capacity.

Tim Tischler: I love Dr. Woods' paper, “The Theory of Graceful Extensibility.” I reference it and reread it often. It's amazing that within his model, he can describe software systems getting hammered by a denial-of-service attack, human teams in a software organization spending too much of their time being interrupt-driven to push things forward, and hospitals during COVID. One model describes all of that.

Now that we're starting to get the shape of what a resilience engineer might look like, we can go and find where there are units, be that unit a service, a team, or a full organization, as in the tangled, layered networks. The connections go across many different levels, and many different things can be units, just as systems are social constructs. Where do you draw the boundaries to find the units that are saturated, or that don't have a large enough perspective on the system to know what they need to do next?

One of the things that I've discovered in the last couple of years of being a site reliability champion is this: If you can get the right people in the room and describe the problem the right way, it becomes easy. But it has to be all of the right people in the room, and the problem has to be framed in the right way. When that happens, they can talk it through and go, “Aha, here's what we need to do. Let's go do it.” Then it's clean and crisp enough that the managers go, “Oh yeah, let's prioritize that,” and off we go.

But if even one necessary team member is missing, from a service that is one of the key contributing factors to the problem, then everyone starts going for workarounds, or they don't see it clearly enough, because they don't have the full context represented there.

It's almost like you are creating a new unit, a new team. All visibility is local, so create a new unit that's connected in a new way to the associated technical systems space. Then the problem is easily solved with those connections that you've put together.

Ward Cunningham: If I can drift us a little in a dangerous direction. We do a lot of work by video call now, and I've noticed that if you get a small enough audience, there's a little conversation.

It's like, “How are you doing? Are you doing okay?” The idea that, with all our privilege, we might not be doing very well makes it hard to answer. There are all the things we used to do that we now can’t, and want to do again. I think that the news is very hard to process. My kids are grown, so we don't have to worry about keeping their lives going, but my wife and I spend a lot of time talking about what we can expect to do, trying to piece things together into a model that makes sense to us. That's complex.

In an incident analysis sort of way, there are a lot of people thinking, “Well, when we come out of this, we want to be a different world than the one we went into it with.” Then we see the issues of diversity, at a time when we’ve already agreed that computers touch everything, and the computers have touched that in a not particularly positive way. We really do have an obligation in the coming months to dig into systems that may be outside of our turf but are part of our responsibility as humans seeking to improve our interaction with systems.

Amy Tobey: Are you suggesting that those of us who have been able to build up this cognitive ability about large systems have a responsibility to apply it further than we have been?

Ward Cunningham: What I find is that we have a lot of friends who kind of respond, “I wish it were this way, so I'm going to assume it is.” A logical argument is one that is whole. There's a wholeness to thought there: when you're trying to figure out the computer system in your retrospective, you really do have to look pretty far and wide.

Jessica Kerr: My hope for the software industry having an impact on the world, besides making computer games, is exactly what we're talking about. We get used to working with systems that are incredibly complex, and we can't just wish them away. We can temporarily draw the boundaries where we choose and say, “Oh, my SLA is really good. It doesn't matter if my ISP goes down and the website's not accessible.”

But we're faced with reality, and we can poke those systems, change them, and build in observability so that we don't have blind spots. Unlike in any other science, we can change these systems and learn from them so much faster, and get used to looking at them empirically. I hope we can also apply that in real life.

Amy Tobey: I really like that. We have this opportunity to bring some of these ideas, like observability, into old large systems that were designed before these concepts were really even solidified.

Jessica Kerr: It's the expectation of being able to figure something out. Or just that we can use that in software to do experiments that teach us about complexity, yeah.

Ward Cunningham: There's a feeling that if you try to understand too much, you'll certainly make mistakes and there'll be unintended consequences. Let's take something really simple like: “If I sell you this, will you buy it?” We'll convert everything to a trade monetized in dollars, and then if we're making money, we must be doing good. Well, that's foolish.

Jessica Kerr: If you have money you must be good.

Amy Tobey: We're talking about feedback systems, right? Ones that need to eat.

Ward Cunningham: Yeah. Look, I live in an economy, so I have to play by its rules largely, but the rules are flexible, as are the incidents we're living through. They're compounding and preparing us to look a little deeper next time. I think that's going to be an appropriate way to get better at what we do: looking a little larger and then bringing what we learned back to the things that we can control, that we can poke on.

Tim Tischler: And vice versa. One of the amazing things in reading everything Woods and Cook and Hollnagel et al. have written and researched is realizing the speed at which the tech industry iterates. It is jaw-dropping compared to British maritime regulations, Dutch rail, or the aviation industry. From the moment something gets designed, to when it goes into an aircraft, through the lifespan of that aircraft, the timeframe is decades. That's the lifespan that a lot of the complex systems out in the broader world operate on, whereas in technology, these things just come and go. The boundaries are so much smaller, and they change so fast. We are learning so quickly. What takes us weeks and months and years would previously take people decades and lifetimes and generations to learn about how the systems work, which is a huge advantage.

Ward Cunningham: It's an advantage as long as we can keep up with it.

Amy Tobey: Well, we can keep up, and we can keep pulling our existing systems up with it. I think that's where America in particular is facing a lot of issues: we've left a lot of tech debt sitting in our social systems.

Tim Tischler: I mean, we still write laws every so often that have hard-coded values in them, like “the minimum wage is $15.” But then what happens in another 30 years, when the minimum wage is still only $15? Why not come up with some index and tie it to that, and then change the index as we learn more, to make a more dynamic system?

Then the law can change its shape along with the adaptations the wider system is going to make, rather than assuming that we will stay where we are right now. The process is a verb. It's always in motion, so to assume that we know something right now, and that it's going to be true for any amount of time, is crazy.

Amy Tobey: There is a sense of inertia there, right? Things that we've believed for a long time are harder to move.

Jessica Kerr: There are systems that move at different paces: nature changes more slowly than commerce. I think this is from Stewart Brand. That's okay in software, too: the customer-facing API changes more slowly than the pieces inside.

Our government has a bigger impact than any one particular website. It's going to change more slowly, but it does change. Sometimes it feels like it changes at a pace that is longer than my lifetime, but that's still change and I can still influence it.

Amy Tobey: Yeah. We have to start small. Well, great. We have a comment from the audience: we highly value reuse, but that reuse comes at the cost of a certain level of understanding of our systems.

Tim Tischler: There's also a tension between something that is robust and something that's resilient. As we go into reuse, I make a thing, give it to y'all, and y'all use it. But wait, it's not quite the right thing for y'all, and I've written all of these tests and done all of this work building it. Now it's not quite the perfect fit elsewhere, and adapting it to anyone else's local conditions and constraints is that much harder.

That's been another big ‘aha’ that I've had. I see software engineers building things with tons of tests, going for robustness in this one use case: "I am certain this thing is perfectly built for it. It will withstand anything I can imagine today, when I'm writing it." But that doesn't make it easy to adapt to new contexts or to things nobody has thought of yet. That's the trade-off between resilience and robustness. Robustness is: we're going to make sure this will work in all of the conditions that we currently know about. But there's also the question: can we make this thing quick and easy to adapt, because it is going to need to adapt in the future? I don't know enough right now, and it's going to change really fast.

Jessica Kerr: One of our audience members also pointed out the knowledge trade-off when his team builds an internal platform: the people who use it don't understand the things that the platform is abstracting over.

As Tim just pointed out, once you put the platform out in the wild and people start using it, the people implementing it lose understanding of how it's being used, because they're not directly connected to all of those users. At least with an internal platform, you have some hope of connection to them, but as soon as it's public, you don't know what people are doing with it. There's a loss of knowledge on both sides.

Ward Cunningham: It is an art, and I associate it with language designers. Nobody wants a language designer who can only solve problems that they can imagine. In language design, you try to figure out what thought process you want to support. I'm old enough that recursion was considered a graduate-level problem, because all the undergraduates learned Fortran, and Fortran didn't do recursion.

Now we deal with recursion, and we know that, “Gee, if you allow arbitrary recursion, your system is going to fail, because somebody is going to send you something that recurses you right out of memory.”
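The failure mode Ward describes can be sketched in Python as a minimal illustration (not something shown on the panel), assuming nested lists stand in for untrusted nested input such as parsed JSON arrays. The limit `MAX_DEPTH` and the helpers `total` and `nest` are hypothetical names chosen for this example; the guard rejects input that nests too deeply instead of letting it exhaust the call stack.

```python
# Hypothetical sketch: nested Python lists stand in for untrusted nested input
# (e.g. parsed JSON arrays). MAX_DEPTH, total, and nest are illustrative names.

MAX_DEPTH = 100  # hypothetical cap; choose one that fits your stack and data


class TooDeepError(ValueError):
    """Raised when input nests deeper than the allowed limit."""


def total(node, depth=0):
    """Sum all integers in a nested list, refusing to recurse past MAX_DEPTH."""
    if depth > MAX_DEPTH:
        # Reject hostile input before it can exhaust the call stack.
        raise TooDeepError(f"nesting exceeds {MAX_DEPTH} levels")
    if isinstance(node, int):
        return node
    return sum(total(child, depth + 1) for child in node)


def nest(levels):
    """Build (iteratively) a list nested `levels` deep around the integer 1."""
    value = 1
    for _ in range(levels):
        value = [value]
    return value


if __name__ == "__main__":
    print(total([1, [2, [3, 4]], 5]))  # 15
    try:
        total(nest(10_000))  # far deeper than anything we intend to accept
    except TooDeepError as err:
        print("rejected:", err)  # rejected: nesting exceeds 100 levels
```

The limit is an explicit design choice: ordinary input still recurses naturally, while a message crafted to recurse "right out of memory" fails fast with a clear error.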

You're never really going to get away from the basics, but in a nice environment, you don't have to think about that continuously. We say, “Well, you don't worry about how the object does it.” You just tell the object what you want done.

Finally, after writing some N-cubed algorithms, I said, “Well, that's true when you're reading the code. You shouldn't have to think about what the object that receives a message is going to do.” But when you're writing the code, you really have to know what it has to do, because if you don't think about it, you're going to write crazy algorithms.

It’s like an author or poet that has to think of every possible meaning of a sentence to be able to write something.
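Ward's distinction between reading and writing code can be illustrated with a small, hypothetical Python example (not from the panel, and a smaller quadratic cousin of the N-cubed algorithms he mentions rather than a reconstruction of them). `x not in seen` reads like one cheap message, but when `seen` is a list it is a linear scan, so the surrounding loop becomes quadratic. Swapping the list for a set keeps the calling code almost identical while changing the cost. The function names are illustrative.

```python
# Hypothetical illustration: the same-looking membership test has very
# different costs depending on the object that receives it.


def dedupe_slow(items):
    """Keep first occurrences; O(n^2) because list membership scans the list."""
    seen = []
    for x in items:
        if x not in seen:  # linear scan hidden behind `in`
            seen.append(x)
    return seen


def dedupe_fast(items):
    """Same result in O(n) expected time: set membership is a hash lookup."""
    seen = set()
    result = []
    for x in items:
        if x not in seen:  # constant time on average for hashable items
            seen.add(x)
            result.append(x)
    return result


if __name__ == "__main__":
    data = [3, 1, 3, 2, 1]
    print(dedupe_slow(data))  # [3, 1, 2]
    print(dedupe_fast(data))  # [3, 1, 2]
```

Reading either loop, you don't need to think about how `in` works; writing one, you do, because that choice decides whether the loop scales.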

Amy Tobey: Well, on that note, I want to thank everyone. I really love how we got a little bit out of tech. That was wonderful.

We run our SRE Leaders Panel as a recurring series, so please follow Blameless on Twitter or check out our Events page to stay posted on the next one.


Blameless

Blameless is the industry's first end-to-end SRE platform, empowering teams to optimize the reliability of their systems without sacrificing innovation velocity.
