SRE Leaders Panel: Work as Done vs. Work as Imagined

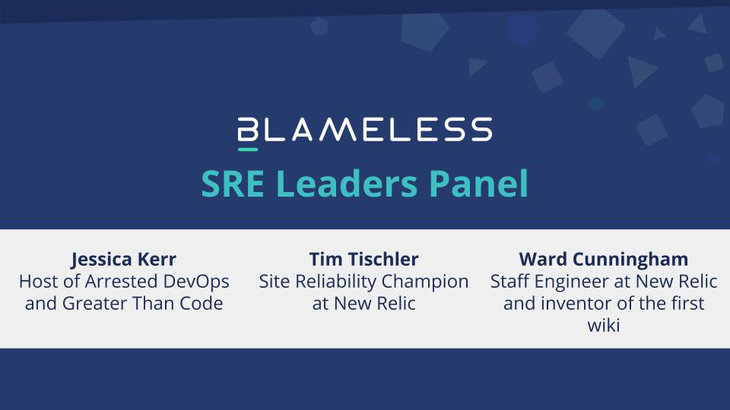

Blameless recently had the privilege of hosting some fantastic leaders in the SRE and resilience community for a panel discussion:

- Ben Rockwood, Head of Site Engineering at Packet and one of the early leading voices in the DevOps movement

- Morgan Schryver, Senior SRE at Netflix, where she identifies human and technical factors of reliability

- Rein Henrichs, Principal Software Engineer at Procore and Co-host of Greater Than Code

Our panelists discussed the effects of imposter syndrome, especially during high-tempo situations; how to use it to our advantage and overcome doubt; and how culture directly affects the availability of our systems.

The transcript below has been lightly edited, and if you’re interested in watching the full panel, you can do so here.

Amy Tobey: Hi, everyone. I'm Amy Tobey and I'm the staff SRE at Blameless. I've been an SRE and DevOps practitioner since before those names existed. Let’s introduce our panelists in alphabetical order by Twitter handle, starting with Ben.

Ben Rockwood: Hi. I am Ben Rockwood. I am the Director of site engineering at Packet Equinix. Prior to that, I was at Chef for a little over four years doing operations. Prior to that, for about 10 years I was at Joyent, which was one of the first clouds ever to be created back in 2005. Before that, I was a consultant in the valley. I've been doing this way too long.

Morgan Schryver: I'm Morgan Schryver. I'm a senior site reliability engineer on the CORE team at Netflix. Before that, I was a senior SRE at Blizzard on the data platform and at a few smaller places. I've probably been doing this for less time than a lot of the people on the call. I stumbled into it because I started out as a developer who figured out how to use Linux and a command line, and just got stuck with it.

Rein Henrichs: I am Rein Henrichs. I am a principal engineer at a company called Procore. If you haven't heard of us, we build software that powers the global construction industry. I have straddled the wall between dev and ops for most of my career.

Amy Tobey: Perfect. A couple of us have had discussions recently about imposter syndrome, and I felt like that'd be good to bring up right away. I wanted Rein to start by saying a little about how imposter syndrome impacts your ability to move forward in your career and how it impacts your mental health along the way.

Rein Henrichs: As we're talking, my palms are sweaty, my knees are weak, and so on, even though I do a podcast pretty frequently and I'm pretty good at talking about stuff. I will say that the flip side to imposter syndrome is that I never want to feel like I know everything. Feeling that there's more for me to learn is healthy, but also feeling like I'm not good enough to do a thing I've been doing for 12 years gets in the way.

Amy Tobey: I've had a lot of experience with that over the last few years, moving around a little bit and having some self-doubt like, “Can I do this? Am I really in the right spot? Am I chasing a career that's beyond me?” I like to think that has turned out untrue.

I wanted to go to Morgan a little bit to talk about what it's like being at the helm of the world's most popular video service.

Morgan Schryver: It's intimidating. When Amy first approached me to do this, I said, "Wow that sounds really cool. You have the other people on there, that's going to be awesome. Why do you want me there exactly? I'm really confused."

A lot of us, I think, end up feeling like, “How did I get here?” I certainly couldn't have picked this out for myself a few years ago. Even when I started interviewing early last year, there were some things that I considered moon shots that I didn't think were possible to achieve because I just didn't know enough. There are so many people with more experience who are more articulate and do this so well that I didn't think I was really qualified.

Then there's been this overwhelming amount of evidence and feedback from people like, “No, you're doing this and this is exactly what you need to be doing, so keep doing it.” It's still intimidating, even with that feedback. It can still feel scary.

Amy Tobey: Thank you. Ben, you hire a lot of people like us and probably talk to more people than any of us do in a given month. I thought I'd take the opportunity to ask you to use your leadership experience to talk about how you see it impacting the people that work for you or that you're trying to hire.

Ben Rockwood: I really value humility and curiosity. I think imposter syndrome is something that is healthy and necessary because it means that your passion is engaged, that you care about what you do; you want to do it well, you want to do it better, and you know that you can be better. It's a driving force that can be very healthy. There's a balance though. You can't allow it to stop you.

If you think about pre-DevOps SRE, you would refer to the person who didn't have imposter syndrome as the gray beard or your operator from hell. Nobody liked that person who knew everything and was unshakeable. Imposter syndrome gets a bad rap. It's a necessary thing that we deal with.

If you think about what we call it in a psychological context, it's really the Dunning-Kruger effect playing out. One of the ways imposter syndrome is triggered is when people who know very little about something go to a website or read the Wikipedia page on some given technical topic. Then they come back and ask you questions as though they're an expert.

They will frequently ask you a question that you, feeling like you are an expert or have some level of expertise, don't know the answer to. All of a sudden you're like, "Oh, I'm a fraud. This person actually knows more about it than me.” They absolutely don't.

Software is complex, the world is complex, and scenarios are different. You couldn't possibly have experienced every scenario. You’ve engaged with a tool, product, problem set, or area of study to solve a certain problem. You know it from that direction. You may know it very deeply. You don't know everything.

It only takes one person to ask you about some aspect you haven't considered before, and all of a sudden you're on your back foot, you feel like a fraud, and you're scrambling to catch up.

If you're in a strong, supportive environment, that turns into curiosity and you say, "That's a great question. I haven't done that sort of thing before. Let me go find out and I'll come back." Everyone else says, "Great, because you're the expert and we're excited." If you're not in a supportive environment, it comes off as accusatory and it knocks people down. That's why culture is so important to us.

Amy Tobey: It really is. There was another part of imposter syndrome I wanted to touch on. When somebody gets to be what most of us would call a real expert, they tend to answer questions more cautiously and express a lot more uncertainty.

Rein Henrichs: There's a simple answer to every question, which is “It depends.” It's not a very satisfying answer, but it's often the best answer. I think the flip side of imposter syndrome leading to growth is that it's also a sign of growth. Once you start to see how much there is to learn, you start to get a better idea of your place in the universe. You see who you are in relation to the sum total of human knowledge, and it's very intimidating. Once you start to see this, it'll be very difficult to think you're an expert on anything.

Amy Tobey: When you are responding to incidents in a large infrastructure that a lot of people care about, one that will light Twitter on fire over a few seconds of downtime, it puts you in a position of authority and gives you the ability to say things with confidence about that system. But the reality is, when you're dealing with the emergent behavior of that system, it's a lot less clear. So Morgan, I wanted to bring it to you to talk about how your experience in the last couple of years at Netflix has changed how you look at your expertise in SRE compared to when you were at Blizzard.

Morgan Schryver: It's definitely been a challenge. Before coming to Netflix, I worked on systems where I had a solid understanding of all the moving parts. You could page me for pretty much anything in different parts of the infrastructure. At previous jobs, I could probably solve an issue or at least get you on the right track and get the right people in the room.

When you come to somewhere like Netflix that has such a sprawling infrastructure with such history and depth behind it, you recognize that you will never understand this system in fullness. There are people who have been on the team for years that are still learning about new things constantly. It makes you feel a little better about it, but it also forces a very difficult mentality shift in responding to incidents.

I was so used to getting paged and investigating it, fixing it, and maybe putting in a ticket afterwards because someone might be interested in knowing what's going on. That would be the extent of it. People weren't really interested beyond that. You just fix it and you let people know and that's that.

When it comes to doing incident response at Netflix, I rely on other people. I rely on other teams and their expertise. They rely on me being succinct, confident, and quick at owning and directing situations. We often call that role incident commander, and it really does feel like the role has to embrace a lot of those qualities.

It's been difficult for someone like me, who has a severe case of imposter syndrome, to make these almost absolute statements. I want to have all of the information I think I might need to be absolute when I make that statement, but I've had to let that go. It’s not possible. It's been reinforced by my teammates and by the rest of the company.

I've been in that position where I was a commander of an incident that made the news. That was scary, but the next day and even that same day, I was in a room with the rest of my team and we were talking about all the things we thought we did great, and the ways we felt good about how we responded. We had a constructive conversation about what we could have done better, how we could improve. But it was based on this foundation of, “So we did this pretty well.”

It's been a long, hard transition, but I've had the support and psychological safety to know that I'm occasionally going to be wrong in an ops channel with over a thousand people in it. That has to be okay.

Amy Tobey: I love that you pulled out that cultural element of support and safety. This feels like a good transition to Ben who mentioned culture earlier. I wonder if you want to talk a little bit about how culture contributes to the availability of our systems.

Ben Rockwood: Culture is fundamental, and psychological safety is definitely a key aspect. Let me put it into a scenario. You're an engineer. You're working late one night. You're digging into a problem and you notice something is broken. Nobody else knows, there are no alerts, but something's broken and you should go and ring that bell to wake people up to deal with it. What do you do?

The way that you handle that is going to be based on your personality and the culture at large. Are you going to get yelled at? Is there going to be finger pointing and blame? Or are people going to rally and thank you for doing the right thing? That's a scenario that happens all the time.

There are plenty of people who believe "if it ain't broke, don't fix it." They fall back on some of these old adages, and it leads to fragile infrastructure or things getting thrown in the backlog. People don't take ownership because they don't want to be the throat to choke.

Almost any environment where somebody uses the expression "What's the throat to choke?" has a dangerous culture. Anybody should be able to speak up at any time and share their feelings and their findings, and it should be viewed not as "they found it, so they own it," but rather "they found it, and we're thankful to them." Now it's a team responsibility. We should all come together and be discerning and careful about how we approach it.

Amy Tobey: I want to delve into where those adages come from and how they get reinforced because we've all run into that in our careers.

Rein Henrichs: There are a lot of directions I could take this in. We're talking about culture and psychological safety, and that can be difficult because these are very abstract, nebulous constructs. There isn't a dial that you can turn on an organization that increases psychological safety.

Martha Acosta points out that there are four levers that leaders have access to that do impact culture. There are social norms such as what we reward and sanction as well as how we treat each other, which we can impact by modeling certain behaviors. There are roles and hierarchies, such as how we define what people do. Then there are rules, policies, and procedures, and lastly metrics and incentives. We have these levers that can influence but not control culture.

So when we talk about blaming, blaming is a product, an emergent behavior, of culture: its ideals, its beliefs, how people relate to each other, and so on. If you want to get a handle on blaming, I think you have to look at what you can do with those levers first. Modeling behaviors, I think, is very effective. As to where these behaviors arise, there's a book that argues that our brains are hardwired to blame. I'm not sure that that's the case.

It seems like blame is just one form of general cognition, but it does seem like blame has adaptive qualities. Blame is actually effective in regulating social behavior. When I see someone doing something wrong and I tell them, the way I did that is by blaming. That was appropriate and adaptive. Where it gets us into trouble is when we misapply it, or when we over-sanction: we blame someone more than they deserve.

Amy Tobey: Or stop too soon, right? The idea of “there's no human error” can stop the investigation too soon.

Netflix's culture is really famous for some of these very intentional design decisions. In particular, the incentive part is largely taken care of, and the feedback system is one of the ways they regulate the attribution of blame as part of that cycle.

Morgan Schryver: That was actually one of the attractive things about Netflix to me, because for so long I had operated in a void. A lot of the time you don't have anyone who understands what you're doing or knows the system you're working with. There's this lack of feedback: you're unfettered, so you can do whatever you want and learn what you want, but you don't really have anyone checking on you or helping you improve.

Netflix having feedback as a main tenet of the culture was really attractive to me. That has manifested in a lot of ways, and it's especially important for that to be the culture from the top down.

As I mentioned, I've had incidents where I didn't feel I handled things perfectly, including one that made the news. What helped was that the day after, when the incident wasn't even fully resolved, I scheduled a meeting with a few of the senior members to get feedback. I had the confidence that those people would make the time and would give me honest, constructive feedback that would help me improve. They call it the gift of feedback here, and it's very true. It is part of their job to point these things out to me.

That is how I was able to adapt. I got feedback that I needed to really own incidents more and be more decisive and clear in my communication. I had this proclivity for diving in and looking at metrics and dashboards to make sure I knew exactly what I was saying before I said it. I got feedback that this is not what I need to be doing. My job is to coordinate the efforts of people who know that system and can effectively make decisions at the local level.

Amy Tobey: I really like that interlock between the constant feedback, but also how it's incentivized on a regular basis. Ben, what do you do in your organization in terms of incentivizing feedback and creating these positive feedback loops?

Ben Rockwood: We do a lot of team communication and make sure that it's a team responsibility, that it's not on any single person’s shoulders. That takes a lot of the pressure off and allows people to work quickly and freely.

We view incident management as an example of this iterative process. Let's identify what we know and don't know, start drilling in, break up the team, and work together so that it's a real team activity. It is really easy as an incident commander to get bogged down checking on the details. You definitely don't have time to do that, and that's not your responsibility. You have people who can do that, and you've got to lean on them to go out, grab all the information, and bring it back to the team.

It’s also important to have that strong postmortem activity where you can bring as many people together as possible to make sure that you feel that it's a shared organizational opportunity and isn't falling onto anyone's shoulders.

We ask, “How could we have individually responded better? What changes could we make to each of our roles and how we function to improve the process?”

One thing I wanted to bring up is that the most common form of blaming that arises in these sorts of things is the retroactive question: "Why didn't we [blank]?"

A lot of times I think we think about blameless as meaning we don't say, "Bob, it was your fault," or "Bob, you did this." But a lot of times, the blame is on the backlog. We knew that this was a thing, so why didn't we do it? It's always tempting to go back through and prune our backlog to determine, “Why is it that we knew it was an issue and now it's come up?” It’s impossible to know that, of all the hundreds of things in our backlog, that was the one we should have done, because it could have been any of the others. There are a number of ways that we come back around to blaming. I think that the meta-narrative here is that any time we go from an organizational responsibility to an individual responsibility, we set ourselves up for failure.

Rein Henrichs: I really like what Ben said about the hundred things in the backlog. But the question is, when we made the decisions, what was on our minds? If you start from the assumption that everyone is doing the best they can, then clearly we're prioritizing a hundred different things and other things are higher priority. In addition to shifting attention away from the individual and onto the system, I think it's also good to try to do what Dekker talks about which is get in the tunnel with the worker. Figure out what the event looked like. That's the only way you can understand why they made the decisions they made.

Amy Tobey: That brings us back to engaging your experts as a coordinator in an incident where you're in the tunnel with them, and the way that these experts will blame themselves. Our job as a coordinator is to bring them back out of that and to look at the system.

Morgan Schryver: I think that a huge benefit at Netflix is that teams operate under the service ownership model. They build it. They ship it. They operate it. They maintain it. Every single engineer that starts at Netflix goes through an on-call course that talks about what on-call looks like and some of the expectations. That sense of ownership is instilled early on and you can see it, especially in the complex failures, where there's this huge pressure placed on individuals and teams.

I've seen teams that have really kicked themselves over something. It's very easy for someone in my position to say, "Oh, that happens. Here are some of the factors going into it," but for someone who gets paged in the middle of the night for a service that isn't normally on the front lines of a lot of these outages, it's very easy to think, "Well this is my fault. I've screwed this all up."

I think that's part of that incident commander role and following up. You can't just make sure you take care of action items or things to fix in the system. You have to look after the people too. You have to make sure that they are confident in engaging with the process, and that they aren't intimidated by it because then you are depriving yourself of those resources. If that person feels bad having come in and contributed and communicated during an incident, the next time that comes up and I need that expertise, they may not be as available or as immediately responsive.

Here's an interesting anecdote about this pressure and questioning decisions: it's very interesting to see at Netflix how factors completely outside of the team's control can sometimes affect the retrospective. For an incident that maybe didn't have a lot of impact but for some reason received media attention, there's going to be a lot more introspection, questioning, and doubting. I've talked to team members recently who were like, "I really feel like I should have done that evac sooner." None of us in that situation would have immediately done that. But because of the pressure of an external event that someone’s talking about publicly, now you're doubting yourself more.

Amy Tobey: The more expertise these folks have, the more likely we are to be doing that introspection automatically, because that's a big part of how we gain expertise. We grow in expertise and we have all this deep knowledge in our vertical in the system, and then something big happens and it causes that wave of doubt to come across. How does that play out in terms of building our expertise and our operational capabilities, and even our adaptive abilities?

Rein Henrichs: I think it's easy to look back on your behavior during an incident and find fault in all sorts of things. With the benefit of hindsight and better access to cognitive resources, because we're no longer under this incredible pressure, we think differently about the thing that happened. It's easy to think that we didn't do well because we can compare other alternatives and go through an analytic decision-making process. The fact of the matter is that in the moment during the incident, that's not how you're thinking.

You're not making decisions in this analytic mode; you are doing what Gary Klein calls recognition-primed decision making. You think of a thing that could possibly work and then simulate in your mind whether it's a good idea. Then, if it seems to fit your model of a good thing to do, you just do the thing.

Very rarely in the moment are we actually thinking of alternatives and weighing them on a matrix of different factors. That's just not how people do things. So it's easy to think in hindsight, "Oh, my decision was bad because I didn't make the decision this way." But that's just psychologically not how people work in these contexts.

Ben Rockwood: One of the things this causes me to think about is postmortems. Growth is normally associated with a certain amount of pain or discomfort. When these sorts of problems arise, there's a lot of light shone on a system. Things can quickly get out of hand. In fact, something I've frequently seen in incidents is that somebody identifies a problem in a system, everyone digs in, and what you find is 30 different problems. You can easily get to a point where you're like, “What was the actual thing that created this incident in the first place? I don't recall anymore. I found all these other things that are sub-optimal.” Investigating and searching for problems is a great learning opportunity. The challenge I have in a lot of incidents is that normally, as soon as the incident is over, all that knowledge just falls to the floor.

To me, in the postmortem process, the most important part is not necessarily what happened and what the contributing factors were. The most important part to me is, what did we learn? And in that moment, what other things did we discover that we hadn't considered before? How can we capture all that learning to feed back into the system so that the entire system can become stronger in the future? Those are opportunities you can't replicate in a steady state on a good day, because you're just not engaged in that mode of thinking.

Once the incident is over, in a lot of cases, if you have a good heart, you will feel some sense of responsibility. Your heart sinks. That's the moment where all of us wonder why we decided to do this for a living. We're wondering if the 7-Eleven at the end of the street has an opening. But then you come together as a team, and you get through it. You go through that postmortem and at the end, you still have a job. You didn't get fired. Everyone learned something. We have new things to do.

Everyone walks away like, "I love my job and I love doing this," and everything is great. But then you quickly switch contexts, since a lot of us aren't on teams that handle only incident response. As soon as the incident’s over, we're back to future work. We're thinking about all the things on the roadmap. We're not necessarily thinking about handling all the backlogged system-improvement work. Trying to find that balance in the day is always a challenge.

Morgan Schryver: When I hop in and I'm responding to an incident, in that moment there's this rush of adrenaline. That's when I usually feel the best at my job. For whatever psychological reason, I think there are certain people in the industry similar to me who enjoy being whatever the nerd version of an adrenaline junkie is. Getting paged and doing my job makes me feel better. But when it comes to hindsight and thinking about where I wasn't effective, that imposter syndrome creeps right back in.

Amy Tobey: I remember from your talk, Rein, at Redeploy that you spoke about introspection. There's the adrenaline pop and then, as Ben mentioned earlier, as you're going through and looking for stuff and you discover these other dark corners, you get that little pop of dopamine when you think you might have found a solution. But often this isn’t the solution. You’ve got to keep going, right?

Rein Henrichs: The thing that happens with our adrenaline during an incident is that our limbic system gets engaged. These fight or flight parts of our brain are engaged. It's very difficult to think analytically, to think deliberatively. So if you're familiar with Daniel Kahneman’s book “Thinking, Fast and Slow,” system one thinking is performance-focused fast thinking, and system two thinking is slower more deliberative thinking. Once you engage system one, system two takes a back seat. It's not true that these are completely separate systems that don't interact, but once you've got the adrenaline flowing, it is very difficult in the moment to be deliberative.

A lot of what you're doing is responding to impulses, responding to these affective, value-laden evaluations, a lot of which are feelings. Did you think deliberately about which metrics, what part of the dashboard to look at? Maybe, but more likely you had this instinct that said, "Oh I should look at this." That's adaptation. There's a reason our brain works that way.

Amy Tobey: I like that idea of thinking about it in terms of, “What is our in-the-moment thinking?”

Rein Henrichs: If you want to understand how to get better at incident response, you need to understand these things. You need to understand how brains work, or rather how minds work, because minds aren't just brains. You need to understand how cognition works in these circumstances. You need to build systems that co-evolve with that in mind. It's not enough to look back and talk about what people should've done. In the future, they still won't do it.

Ben Rockwood: The point about the limbic system is really important. This goes back to that meta point of culture. When we're in a stressful situation, all our brains want to do is whatever is necessary to make the pain stop.

We do have some control within an organization about where that pain comes from. Is the pain that the system is down? Or is the pain fear of an individual? Are you really focused on bringing the system back up or are you trying to make your boss, some other member of the organization, or one of your team members happy? Who's the source of the pain that we're responding to?

It’s important when we look at retros to bring out individual feedback with team coaching. In a lot of organizations, we want to say that we're concerned about availability. I think in a lot of organizations, the truth is you're not actually concerned about the system availability at all. In the heat of the moment, you're concerned about this individual, or that individual, or CNN, or whomever. We have some control in our culture as to how we channel that.

Amy Tobey: How do you go about that with your teams? I imagine there's a large leadership component to setting up the environment for people to respond with their full emotional capacity.

Ben Rockwood: I like to make it part of my coaching regimen, and just make sure that when we look back at things that have happened, we draw out people’s core motivations. What was really driving you in the moment? What were you thinking about? Is that a healthy response or an unhealthy response? Is this something you need to change in your thinking that we can work on together? Or is it an endemic problem in the organization that we need to change culturally?

Again, bring it back to the team. We need to make sure that we're always doing whatever's necessary to act not as individuals, but as a tribe.

Rein Henrichs: Ben, I really like what you were saying about the signal that people are having is pain. Martha Acosta talks a lot about the importance of pain as an organizational signal because pain does two things for us. First, it shows us something that needs to change. Second, it shows us that there's an availability of learning.

These pain signals are things that, if we dig into them more deeply, we see they're opportunities to learn. Also, I would argue that if people in the organization are in pain and you don't care about that, then you're doing them a disservice.

Amy Tobey: I think most of us are working in organizations that tend to zoom in on that really quickly. I'm experiencing this pain and it gets signaled. But I've also worked at places where saying that was not a great idea.

Rein Henrichs: What's interesting to look at is what happens to that signal. Where does it go? Where does it stop? How quickly does it get there?

Amy Tobey: Absolutely. A lot of what we do with the postmortem process is provide a grounding plane for it to be fed back into the system.

Rein Henrichs: Pain goes right to the top in terms of the way our nervous system responds, and it engages us immediately. It shuts everything else out.

Amy Tobey: Then we need either social engagement or space to return back to that higher reasoning part of our minds, right?

Rein Henrichs: Right. Also, if the CEO is the brain of the firm, then where are your pain signals going and at what level do they need to be dealt with?

Ben Rockwood: This is where it feeds right back into imposter syndrome. If there is a latent belief inside of you, real or imaginary, that you are insufficient, in that moment, are you going to fight or are you going to fly? You're going to fly. You're immediately going to fall right back into that position of, “I shouldn't be doing this. I'm a failure. It's all me.”

What you're going to look to do is to remove yourself from the situation as quickly as possible. It may turn out that even though you feel like you're an imposter, you have the most knowledge about the system at the moment. That is not the moment we need you to disengage and run away. That's the moment we need to prop you up and bring you together.

It’s important to be open and vulnerable as a team so that we understand when other team members might have that reaction. At this moment, let's come around and rally them. It's really important, that team building and support of each other.

Amy Tobey: That's backed up by polyvagal theory: if we're in the fight-or-flight part of our nervous system, we can regulate that through social engagement with our peers or our herd. I notice somebody in the comments brought up the idea that the pain goes up into the organization, gets absorbed at some level, and then stops. It creates that frustration because I've engaged. I've been vulnerable and shown my pain to my leadership tree, and then nothing ever happens.

Ben Rockwood: There's always some source of pain. It's just a matter of which pain is louder. It’s like a sore thumb; it's bugging you, and then you get a paper cut. All of a sudden your thumb is not bothering you anymore because all you're feeling is your paper cut.

Very frequently, this is what happens at organizations as soon as an incident is over. During the incident, the most acute thing in the world is this problem. Why didn't we fix it? What do we need to do, and how do we get back up? As soon as it's over, another pain replaces it. So it's important to realize that there are all these stresses. They're always competing, and it's very hard to see beyond the top one, two, or three sources of pain to all the other ones beneath them.

Amy Tobey: Absolutely. With that, we did get a couple of questions. The first one I want to start with is from Benny Johnson, who asked, “In a blameless postmortem, do you ask, ‘How do we ensure this doesn't happen again?’ If so, how do you limit those actions to avoid gold plating?”

Morgan Schryver: One of the things that I think has been useful so far in my time at Netflix is having excellent people on the team like Jessica DeVita, Ryan Kitchens, and Paul Reed, who have been leading a huge push to understand, and maybe restructure, a lot of what we look at as incident reviews and how we ask those questions.

They break it down into, “Well, let’s have a technical look at what failed and how. But let’s also look at the humans and the interactions, and what they were seeing and thinking.” I think that distinction is important because the question of, “How do we prevent this from happening again?” spans both of those.

It's very hard to really cover both effectively. So when you're asking, “How do we prevent this in the future?” it’s like Rein said before: it depends. It depends on what trade-offs you're willing to make, what investments you're willing to make, and what priorities you're willing to go with. That's always a really difficult question to answer.

After an incident review, I like to see a greater understanding of the system, the process, and what happened. Maybe there are concrete items. Maybe there aren't. Maybe there are things that people agree are priorities to address and fix, and it fits into their schedule and budget.

But, as has been covered, a lot of times once that pain is gone, those priorities don't exist. So it's very hard to determine exactly what will prevent that from happening in the future, because you're also only focusing on one thing. If you commit to fixing this one thing, and patching that one thing over and over again, you're probably never going to get around to addressing the larger problems, or the more systemic risks that crop up everywhere in your system and organization.

Rein Henrichs: I think there's also a danger in focusing on how we prevent this from happening again. If you successfully prevent every incident from recurring, does that mean you won't have any more incidents? I don't think it does. There also has to be a focus on how we get better at responding to the next one, which won't be quite like this. It might rhyme, but it won't repeat.

Ben Rockwood: Pain in our bodies is our monitoring system. When somebody's bending our arm, it hurts more, and more, and more the closer it gets to actually breaking. This stimulates us to take action to prevent the actual final incident of the broken arm.

In our technical systems, this is how our monitoring systems function. One of the most important things for me in postmortems is looking at the contributing factors and how we could have had better foresight into the pressure or problems building up in those contributing factors.

The pain in the organization of responding to an alert is different than the pain of responding to an outage. So how can we just make sure we’re learning about our blind spots where problems accumulate over time? Because no incident ever has a single cause. There are always contributing factors, usually several contributing factors that then conspire together to a point where it's untenable.

Improve the nervous system of the organization to sense those things earlier and be able to respond to the inputs rather than the final outcome.

We run our SRE Leaders Panel as a recurring series, so please follow Blameless on Twitter or check out our Events page to stay posted on the next one.

Recommended Reading from the Panelists:

- Martha Acosta on Systems Safety and Pain

- https://en.m.wikipedia.org/wiki/Viable_system_model (specifically algedonic signals)

- Gary Klein's Sources of Power

Originally published on Failure is Inevitable.


Blameless

Blameless is the industry's first end-to-end SRE platform, empowering teams to optimize the reliability of their systems without sacrificing innovation velocity.
