You have Kubernetes, now what? Single or multi cluster? A mini guide

Prologue

Let's say you have been running a Kubernetes cluster in production for a year, and at the scale you've been running it, it has worked well for you. However, as you onboarded more teams with different kinds of applications, you realized that you are at a crossroads.

Things started to unravel when you realized that some of the requirements are not easily accommodated by your existing cluster setup.

Some teams wanted to add statefulness to their services. Some wanted to run huge pods, an order of magnitude bigger than what you are accustomed to. And some teams wanted to use elevated privileges in their pods. How would you accommodate this growing list of requirements in your cluster?

Assume you have a fairly stateless cluster with standardized nodes for memory-bound applications.

Plotting the requirements against your capabilities, you came up with this table.

| Requirements | Team | Can we support it? |
|---|---|---|
| Statefulness of pods (StatefulSets) | Database Team / Storage Team | No, not with the current setup. |
| Big pods | HPC Team | Maybe, but we would have to rewrite the Helm charts and move the entire cluster to even bigger nodes. |
| Lots of pods | Microservices Team | Yes, but we need to allow Cluster Autoscaler a higher max, which is harder to manage from a cost point of view. |
| High CPU usage pods | Security / Crypto Team | Yes, but we need to set CPU and memory limits, otherwise we might have a noisy neighbor problem. We also need to create a new set of node groups / node pools and taint them so they are reserved for high CPU workloads. |
| Completely left-field unknown use case | Unknown Team | Probably? |
| Pods with no security boundary for observability | Observability Team | Yes, but we probably should not put this in the same cluster, or we will get pushback from the security team. |
| Airflow Kubernetes setup for ETLs | Data Ingestion Team | Ahm... as a cloud infra engineer, I have no idea what this is. |
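The high CPU row combines two mechanisms: per-container resource requests and limits, and a tainted node pool that only tolerating pods can be scheduled onto. Here is a minimal sketch of such a pod manifest, built as a Python dictionary so it can be dumped to JSON and piped to `kubectl apply -f -`. The taint and label key `workload-type`, the value `high-cpu`, the image name, and the resource numbers are hypothetical placeholders, not values from a real cluster.

```python
import json

# Hypothetical taint placed on the dedicated node pool, for example with:
#   kubectl taint nodes <node-name> workload-type=high-cpu:NoSchedule
# The sketch assumes the same key/value is also set as a node label.
TAINT_KEY = "workload-type"
TAINT_VALUE = "high-cpu"


def high_cpu_pod(name: str, image: str, cpu: str, memory: str) -> dict:
    """Build a Pod manifest with requests/limits and a matching toleration."""
    resources = {"cpu": cpu, "memory": memory}
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            # A toleration only permits scheduling on tainted nodes; the
            # nodeSelector is what actually steers the pod to that pool.
            "nodeSelector": {TAINT_KEY: TAINT_VALUE},
            "tolerations": [{
                "key": TAINT_KEY,
                "operator": "Equal",
                "value": TAINT_VALUE,
                "effect": "NoSchedule",
            }],
            "containers": [{
                "name": name,
                "image": image,
                # Requests reserve capacity; limits cap what the pod can use.
                "resources": {"requests": resources, "limits": dict(resources)},
            }],
        },
    }


manifest = high_cpu_pod("crypto-worker", "example.invalid/crypto:1.0", "4", "8Gi")
print(json.dumps(manifest, indent=2))
```

Keeping the other workloads off the tainted pool is what addresses the noisy neighbor concern; the limits alone only bound how much a single pod can consume.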

Dilemma

What do we do? Shall we create a new cluster for each one of these requirements? Shall we create a new cluster for each team and each environment?

Well, yes, that is one way to do it. One extreme way. Imagine what you have to do to set up a new cluster for each of these.

Setting up a new cluster per env / team

- Kubernetes Lifecycle Management

- Logging Aggregation

- Metrics Management

- Network Connectivity

- Load Balancer and Ingress Controllers

- Helm Chart / Kustomize Deployment Pipelines

And yes, you can reuse some or most of those. But the problem is not the initial setup. The bulk of the effort will go into day 2 operations once those clusters are up and running. Things like:

Day 2 Operations for Clusters

- Kubernetes Upgrades

- Vulnerability Management for Images

- Cost Management (Very Inefficient Nodes)

- Patching Underlying Nodes (Applicable on self managed Clusters)

- Alerting and Alarming

So if we make many small clusters, we will face all these day 2 issues. What do we do then? We know that putting all of the requirements in a single cluster is a disaster waiting to happen. Inevitably, as we add more and more capabilities and scale to the single cluster, we will face diminishing returns.

Answer!

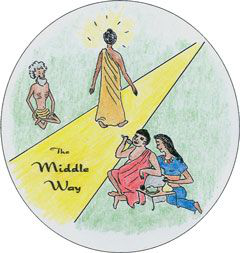

What's the answer then? Well, like most things in life, the 'mostly' right answer is somewhere in the middle.

What is the 'middle'?

There is a choice between

- a single cluster for everything

- a cluster per single team, per single environment, per single use case

The obvious answer is: let's create a cluster per environment.

If that solved your problem, congratulations!

If that's not a sufficient answer because your requirements are complex, read on!

So we created a cluster per environment and we still can't meet some requirements. What now?

What comes next is a robust assessment of where your company is and where you want it to go. After all, as the old adage says, "There are no silver bullets."

A few factors to consider, based on where your roadmap is going:

- How many times do you think you will have to assess a use case?

- Is going through a formal process better for you, or will a team consult work?

- How much tech debt do you have in your cluster? Is there going to be a refactor or a migration?

If your roadmap states that you might not need a robust process:

- Then I suggest you write an internal doc:

  - Distribute the tribal knowledge of who's who

  - Allow for self-service with a simple 5-5-5 document

    - 5 minutes for writing hello world and deploying it in your cluster.

    - 5 hours for writing a simple app and deploying it in a lower env.

    - 5 days for being able to deploy in production.

That's the bare minimum needed. Congratulations!

If you need a process, here's my personal take on what a good process should be:

Start by agreeing on good criteria for deciding whether or not to create a cluster.

My personal criteria

| Criteria | Status | Details |
|---|---|---|
| Cost Analysis | ✅ Cost analysis done and allocated. Cluster size (M-L). More than 20 pods.<br>⚠️ Cost analysis done but no allocation. Cluster size (VL). Fewer than 20 pods.<br>❌ Cost analysis done but overspend needed. Cluster size (S or Gigantic). More than 1500 pods. | Small cluster: 1-5 nodes<br>Medium cluster: 6-10 nodes<br>Large cluster: 11-100 nodes<br>Very large cluster: 101-250 nodes<br>Gigantic cluster: 250-500 nodes<br>Absurdly big, hard to maintain cluster: 500+ nodes<br>Pods: 20 pods is usually a good number. Control plane and helper pods at 5 nodes come to around 20, so your workload should be bigger than the control plane. Otherwise it's not worth it, in my opinion :)<br>Control plane: m4.xlarge * 3<br>Min nodes: m4.2xlarge * 3<br>Max nodes: m4.2xlarge * 12<br>Region: Ireland<br>Range cost: $123456 pa.<br>Cost center: 421 |
| Use Case Analysis | ✅ Use case cannot fit into the existing cluster, e.g. stateful.<br>⚠️ Use case can fit into the existing cluster, but the entire workflow of the cluster needs to be updated.<br>❌ Use case can fit into the existing cluster. | Cluster use case is unknown/unclear<br>Use case can fit into an existing cluster |
| Security Analysis | ✅ Does not follow the baseline (either over or under the baseline).<br>❌ Follows the baseline. | Anything that impacts the baseline of security compliance needs review. The baseline is defined in this document under Levels of security. |
| Scale Analysis | ✅ We cannot fit this into the cluster without bloating it beyond a large cluster.<br>⚠️ This will turn the existing cluster into a large cluster.<br>❌ This will have a trivial impact on scale. | Scale currently exceeds an existing setup and cannot be accommodated |
| Operational Analysis | ✅ No, we would need to upskill the existing team for 6 months or more.<br>⚠️ Yeah, probably?<br>❌ Yes, they can support this. | Can your existing team support the operations of this new requirement? |
| Conclusion | ✅ More pros than cons<br>❌ More cons than pros | Cons:<br>- Multi cluster costs more operationally, with the operational overhead depending on tooling<br>- Multi cluster costs more financially, with effective efficiency reduced because pods are not utilizing the nodes heavily<br>- Multi cluster has more complex networking requirements for cluster-to-cluster requests<br>- Conway's law can kick in, and managing the lifecycles of the clusters could be complex (this can be mitigated via a matrix for when a cluster should be created)<br>- Migration of a cluster (if needed) could be another operational overhead to manage (can easily be mitigated by a GitOps model)<br>- Divergence of tooling and standards per cluster (can be mitigated by cluster production readiness) |
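The size bands and the 20-pod rule of thumb from the Cost Analysis row can be sketched in a few lines. This is only an illustration: the function names are made up, and because the table lists 250 and 500 in two bands each, the sketch assumes those boundary values belong to the smaller band.

```python
def cluster_size(nodes: int) -> str:
    """Map a node count to the size bands from the criteria table."""
    if nodes < 1:
        raise ValueError("a cluster needs at least one node")
    # (inclusive upper bound, label). Assumption: 250 counts as Very Large
    # and 500 counts as Gigantic, since the table is ambiguous there.
    bands = [
        (5, "Small"),
        (10, "Medium"),
        (100, "Large"),
        (250, "Very Large"),
        (500, "Gigantic"),
    ]
    for upper, label in bands:
        if nodes <= upper:
            return label
    return "Absurdly Big"


def workload_justifies_cluster(workload_pods: int, overhead_pods: int = 20) -> bool:
    """True when workload pods outnumber control plane and helper pods."""
    return workload_pods > overhead_pods


print(cluster_size(12), workload_justifies_cluster(35))
```

The `overhead_pods` default of 20 mirrors the estimate for a 5-node cluster; measure your own control plane and helper pod count before relying on it.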

The criteria might seem roundabout or arbitrary, but all they try to ask is:

Can we live with this being in the same cluster as we have now? If yes, then why are we bothering to create another cluster?
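One way to make the "more pros than cons" conclusion concrete is to record a status per criterion and count them. The sketch below is a hypothetical encoding, not a prescribed scoring method: it assumes a ✅ counts as a pro for a new cluster, a ❌ counts as a con, a ⚠️ counts as neither, and a tie falls back to reusing the existing cluster.

```python
from enum import Enum


class Status(Enum):
    GREEN = "pro"      # the ✅ rows: argues for a new cluster
    AMBER = "unclear"  # the ⚠️ rows: needs discussion, not counted
    RED = "con"        # the ❌ rows: argues for reusing the existing cluster


def should_create_cluster(assessment: dict[str, Status]) -> bool:
    """Return True only when pros strictly outnumber cons."""
    pros = sum(1 for s in assessment.values() if s is Status.GREEN)
    cons = sum(1 for s in assessment.values() if s is Status.RED)
    return pros > cons


# Example assessment for an imaginary stateful workload.
assessment = {
    "cost": Status.AMBER,
    "use_case": Status.GREEN,
    "security": Status.GREEN,
    "scale": Status.RED,
    "operational": Status.RED,
}
print(should_create_cluster(assessment))
```

Treating a tie as "reuse" follows the spirit of the question above: a new cluster has to earn its place.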

Levels of security

Here is my opinionated suggestion of what a good baseline is.

- Baseline level

  - CIS hardened Docker image Level 1

  - CIS hardened Kubernetes Level 1

  - CIS hardened OS Level 1

  - Turn on Authentication

  - Remove anonymous access

  - Authorization via RBAC

  - SIEM Accounting

  - Nonprod should not have access to PII data

If the baseline is too much or too little for a workload, then consider separating that workload out into another cluster.
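The "over or under baseline" check is essentially a set comparison. A minimal sketch, assuming each control in the list above is represented by a made-up identifier and each workload declares the set of controls it needs:

```python
# Hypothetical identifiers for the baseline controls listed above.
BASELINE = frozenset({
    "cis-image-l1",
    "cis-kubernetes-l1",
    "cis-os-l1",
    "authentication",
    "no-anonymous-access",
    "rbac",
    "siem-accounting",
    "no-pii-in-nonprod",
})


def baseline_deviation(controls: set[str]) -> tuple[set[str], set[str]]:
    """Return (missing, extra) controls relative to the baseline."""
    missing = set(BASELINE - controls)
    extra = set(controls - BASELINE)
    return missing, extra


def needs_review(controls: set[str]) -> bool:
    """Any deviation, under or over the baseline, flags the workload."""
    missing, extra = baseline_deviation(controls)
    return bool(missing or extra)


# A workload that cannot run with one baseline control enabled.
relaxed = set(BASELINE) - {"no-anonymous-access"}
print(baseline_deviation(relaxed), needs_review(relaxed))
```

A flagged workload is a candidate for its own cluster, not an automatic one; the other criteria still apply.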

Conclusion

Creating a new cluster might not seem like a big deal. But putting more thought into reusing your existing one will pay dividends down the road.

If you want to read more about the technical side of things, I suggest going through the learnk8s.io blog post on the same topic.

https://learnk8s.io/how-many-clusters

Thanks for reading!


Kenichi Shibata, Cloud Solution Architect @ shibata.co.uk
