Google optimizes Kubernetes with an autopilot feature

Google's making life easier for the cloud-native community

TL;DR

IT operations has not looked back since Google introduced Kubernetes, which became the benchmark platform for running microservices infrastructure in the cloud. Helpful as it is, Google Kubernetes Engine (GKE) has proven overwhelming for many users over the years. Continued development of the engine has now produced GKE Autopilot, a hands-off mode that takes container orchestration up a level.

Key Facts

Google now manages not just the control plane but the entire cluster infrastructure.

Autopilot brings simplicity and reduces the operational workload for developers.

Most tasks done manually in standard GKE, such as infrastructure maintenance, are now carried out automatically.

Resource optimization comes at a price, but billing is based only on the pods you deploy, not on nodes.

The optimization means a few features have been removed, which will not please users who want full autonomy.

GKE Autopilot supports selecting stack components such as VMs, Virtual Private Cloud-based public or private networking, and CSI-based storage.

Details

Since its release on July 21, 2015, Kubernetes has demonstrated versatility and proficiency in reliability, scalability, and security, enough to persuade 100,000 companies to use Google Kubernetes Engine in the second quarter of 2020 alone. GKE Autopilot is the latest addition to Google Cloud, giving Google a platform that is hard to imitate.

What is GKE Autopilot?

Drew Bradstock, Group Product Manager for Google Kubernetes Engine, said the idea behind Autopilot was to take all the tools Google had built for GKE and combine them with the know-how of its site reliability engineering teams, who run these clusters in production.

Google describes Autopilot as a "revolutionary mode of operations for managed Kubernetes that lets you focus on your software while managing the infrastructure." Google expects Autopilot to entice more businesses to embrace the container orchestration platform because it simplifies operations "by managing the cluster infrastructure, control plane, and nodes."

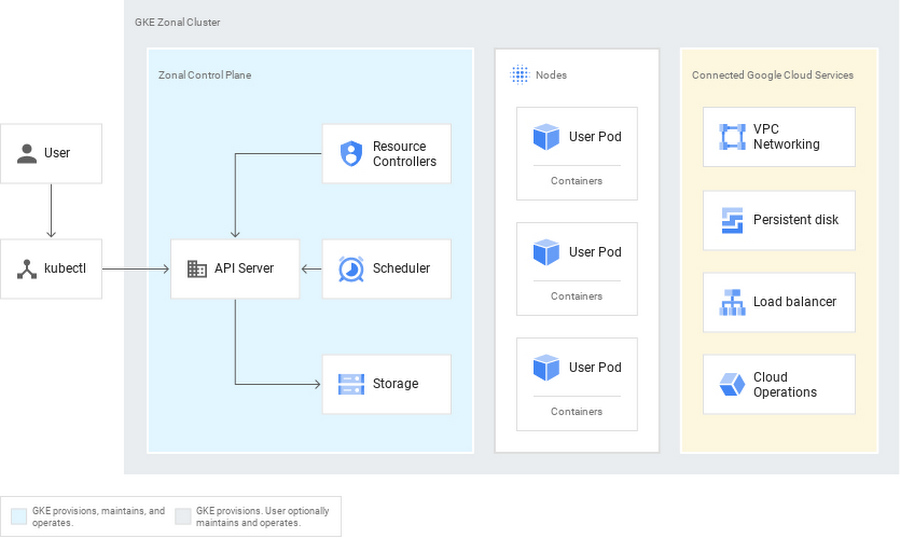

Kubernetes has two main components: the control plane, which oversees the cluster infrastructure and the workloads attached to it, and the nodes, which run customer applications packaged as containers.

When managed Kubernetes first appeared, cloud providers managed only the control plane. The worker nodes, essentially virtual machines, have always remained accessible to users.

With GKE Autopilot, Google manages the entire infrastructure and not just the control plane. This dramatically simplifies cluster creation, because there are fewer decisions to make and each one is easier.

GKE Autopilot emphasizes simplicity: it offers fewer options while still delivering secure, top-grade cluster infrastructure. Compared with standard GKE, provisioning an Autopilot cluster involves very few knobs and switches. Autopilot decides the number of worker nodes and their configuration, determining the best node configuration and the ideal fleet size at runtime based on the characteristics of the workloads you deploy.
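To make the idea of sizing a fleet from workload characteristics concrete, here is a minimal sketch in Python. It is an illustration only and not Google's actual algorithm: it uses a simple first-fit-decreasing heuristic, and the `Pod` type, the `estimate_node_count` function, and the node size constants are all hypothetical names and values chosen for this example.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass(frozen=True)
class Pod:
    """Resource requests of one pod (hypothetical type for this sketch)."""
    name: str
    cpu: float         # requested vCPUs
    memory_gib: float  # requested memory in GiB


# Assumed node shape, for illustration only.
NODE_CPU = 4.0
NODE_MEMORY_GIB = 16.0


def estimate_node_count(
    pods: Iterable[Pod],
    node_cpu: float = NODE_CPU,
    node_memory_gib: float = NODE_MEMORY_GIB,
) -> int:
    """Estimate how many identical nodes are needed to host the pods.

    Uses first-fit decreasing: place the largest pods first, each on the
    first node that still has room, and open a new node when none fits.
    """
    nodes: List[List[float]] = []  # each entry is [free_cpu, free_memory_gib]
    ordered = sorted(pods, key=lambda p: (p.cpu, p.memory_gib), reverse=True)
    for pod in ordered:
        if pod.cpu > node_cpu or pod.memory_gib > node_memory_gib:
            raise ValueError(f"pod {pod.name} does not fit on a single node")
        for free in nodes:
            if pod.cpu <= free[0] and pod.memory_gib <= free[1]:
                free[0] -= pod.cpu
                free[1] -= pod.memory_gib
                break
        else:
            # No existing node had room, so add one to the fleet.
            nodes.append([node_cpu - pod.cpu, node_memory_gib - pod.memory_gib])
    return len(nodes)


if __name__ == "__main__":
    workload = [Pod("api", 2, 4), Pod("worker", 2, 4), Pod("cache", 1, 2)]
    print(estimate_node_count(workload))
```

The point of the sketch is that the fleet size is derived from what the pods request rather than chosen up front by the operator, which is the decision Autopilot takes off the user's hands.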

Some companies see their Kubernetes expertise as a competitive advantage. With Autopilot, businesses can get more out of Kubernetes, largely because there is far less maintenance and management work.

All of this comes at a price, but billing is calculated per pod rather than per node: the more pods you deploy, the higher the bill. Pods are charged for the compute, memory, and storage resources they consume. In addition to the GKE flat fee of $0.10 per hour, there are fees for the resources the clusters and pods consume. Google offers a 99.95% SLA for the control planes of its Autopilot clusters and a 99.9% SLA for Autopilot pods in multiple zones.
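The pod-based billing model and the SLA figures can be worked through with a little arithmetic. The sketch below assumes made-up unit prices for vCPU, memory, and storage; only the $0.10 per hour flat fee and the SLA percentages come from the article. The names `PodRequest`, `pod_hourly_cost`, `cluster_cost`, and `allowed_downtime_minutes` are hypothetical helpers for this example.

```python
from dataclasses import dataclass
from typing import Iterable

CLUSTER_FEE_PER_HOUR = 0.10          # flat GKE fee quoted in the article

# Assumed unit prices, for illustration only; not Google's published rates.
VCPU_PRICE_PER_HOUR = 0.05
MEMORY_GIB_PRICE_PER_HOUR = 0.005
STORAGE_GIB_PRICE_PER_HOUR = 0.0001


@dataclass(frozen=True)
class PodRequest:
    """Resources one pod is billed for (hypothetical type for this sketch)."""
    vcpu: float
    memory_gib: float
    storage_gib: float


def pod_hourly_cost(pod: PodRequest) -> float:
    """Hourly charge for a single pod under the assumed unit prices."""
    return (
        pod.vcpu * VCPU_PRICE_PER_HOUR
        + pod.memory_gib * MEMORY_GIB_PRICE_PER_HOUR
        + pod.storage_gib * STORAGE_GIB_PRICE_PER_HOUR
    )


def cluster_cost(pods: Iterable[PodRequest], hours: float) -> float:
    """Flat cluster fee plus the per-pod charges over the given hours."""
    return hours * (CLUSTER_FEE_PER_HOUR + sum(pod_hourly_cost(p) for p in pods))


def allowed_downtime_minutes(sla_percent: float, days: int = 30) -> float:
    """Downtime budget implied by an availability SLA over a period."""
    return (1 - sla_percent / 100) * days * 24 * 60


if __name__ == "__main__":
    pod = PodRequest(vcpu=1, memory_gib=2, storage_gib=10)
    print(cluster_cost([pod], hours=10))       # one pod for ten hours
    print(cluster_cost([pod, pod], hours=10))  # a second pod raises the bill
    print(allowed_downtime_minutes(99.95))     # control plane SLA
    print(allowed_downtime_minutes(99.9))      # multi-zone pod SLA
```

Over a 30-day month, a 99.95% SLA leaves a downtime budget of roughly 21.6 minutes, and a 99.9% SLA roughly 43.2 minutes. Note that the cost depends on the number and size of pods, not on how many nodes happen to run them.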

Because of its automation, GKE Autopilot has several downsides for users who want full autonomy; they should stick with standard GKE. Autopilot does not support configuring third-party storage platforms such as Portworx by Pure Storage, or a network policy based on Tigera Calico. Deploying applications from the marketplace and adding nodes with GPU- or TPU-based AI accelerators are other features missing from Autopilot.

GKE Autopilot clearly represents a big step forward in areas such as automatic security and automatic scaling. Its ease of use is what sets it apart in the Kubernetes ecosystem. Google has once again moved ahead, delivering an industry first that removes much of the complexity of running cloud-native workloads.
